Tag: software

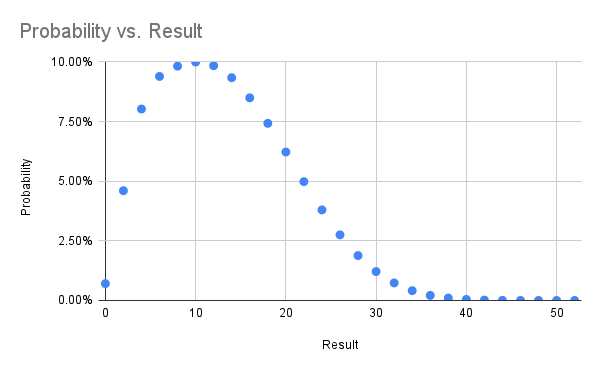

Outcome Probability for One Handed Solitaire

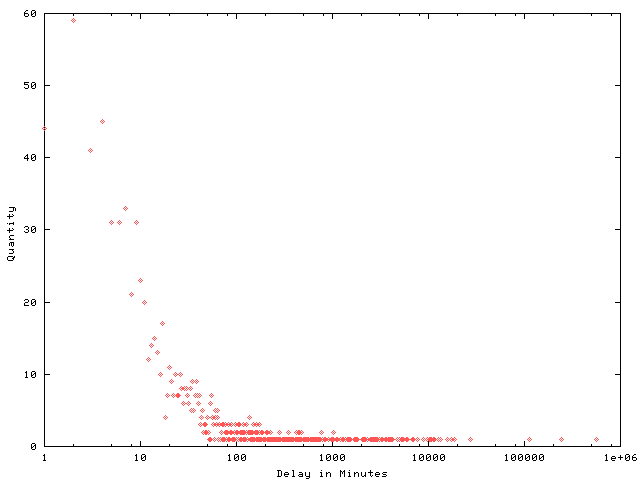

Back in 1994 my circle of high school friends spent a lot of time sitting around talking (there were no cell phones) and for about a week we were all playing one handed solitaire. In suburban St. Louis we called it idiot's delight solitaire (which turns out to be an entirely different game), because there is absolutely no human input after the shuffle. As soon as you've started playing it's already determined whether you've won -- you just spend five minutes learning if you did.

Naturally we wondered how likely our very rare wins were, and being a computer nerd back then too I wrote a Pascal(!) program to simulate the game and arrived at the conclusion you win one in every 142 games.

Now, thirty years later, I've taught the game to the eleven year old in our home, who is just back from a phone-free summer camp with a deck of cards and dubious shuffling skills.

My old Pascal is lost to bitrot, but the game in Python, using a sort of janky off-the-shelf deck_of_cards module, is trivial:

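    from deck_of_cards import deck_of_cards

    def play():
        """Play one game and return the number of cards left (0 is a win)."""
        deck = deck_of_cards.DeckOfCards()
        hand = []
        while not deck._deck_empty():
            hand.append(deck.give_random_card())
            # Compare the newest card with the one three back, repeating
            # for as long as something gets discarded.
            while len(hand) > 3:
                if hand[-1].suit == hand[-4].suit:
                    del hand[-3:-1]  # same suit: drop the two in between
                elif hand[-1].rank == hand[-4].rank:
                    del hand[-4:]  # same rank: drop all four
                else:
                    break
        return len(hand)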
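For anyone who'd rather not install the module, here is a minimal dependency-free sketch of the same rules. It assumes a card can be stood in for by a (rank, suit) tuple and leans on the standard library's shuffle; the names play_hand and simulate are made up for this sketch and aren't from the program I actually ran.

```python
import random

RANKS = range(13)
SUITS = "SHDC"


def play_hand(deck):
    """Play one game on an already-ordered deck of (rank, suit) tuples.

    Returns the number of cards left in the hand; 0 means a win.
    """
    hand = []
    for card in deck:
        hand.append(card)
        while len(hand) > 3:
            if hand[-1][1] == hand[-4][1]:    # same suit: drop the middle two
                del hand[-3:-1]
            elif hand[-1][0] == hand[-4][0]:  # same rank: drop all four
                del hand[-4:]
            else:
                break
    return len(hand)


def simulate(games, seed=0):
    """Return the fraction of shuffled games that end with an empty hand."""
    rng = random.Random(seed)
    deck = [(rank, suit) for rank in RANKS for suit in SUITS]
    wins = 0
    for _ in range(games):
        rng.shuffle(deck)
        if play_hand(deck) == 0:
            wins += 1
    return wins / games
```

Calling simulate with a large enough game count should land somewhere near the odds above, though a small run will be noisy because wins are so rare.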

On the 486 I was running at the time I recall getting ten thousand or so runs over many days. On a tiny Linode in the modern era I got 50 million runs in 15ish hours.

I've gathered the results in a spreadsheet and included some probability graphs below. In trying to find the real name of this game I came across a previous analysis from 2014 which comes to the same overall probability. That blog post is now down (hence the archive.org links) with ominous messages telling folks the author is definitely no longer thinking about this game.

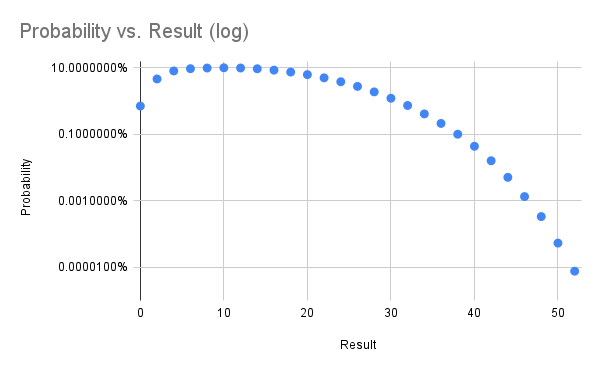

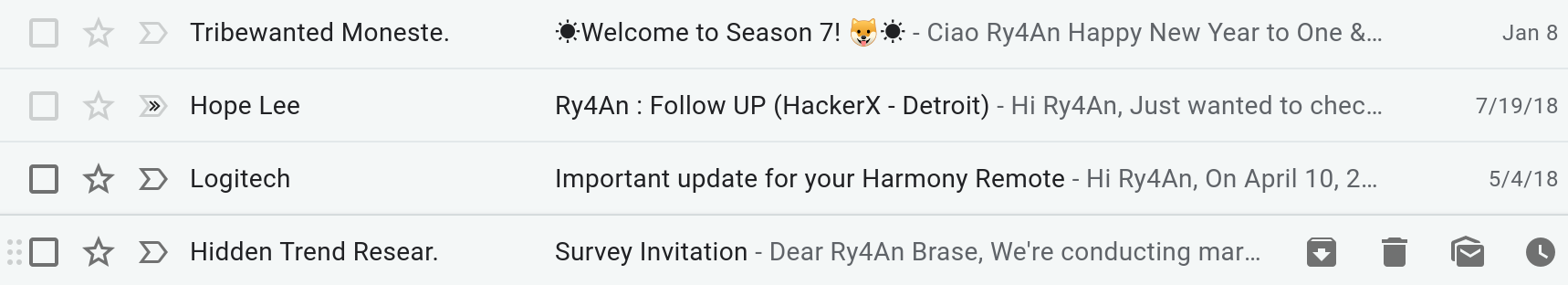

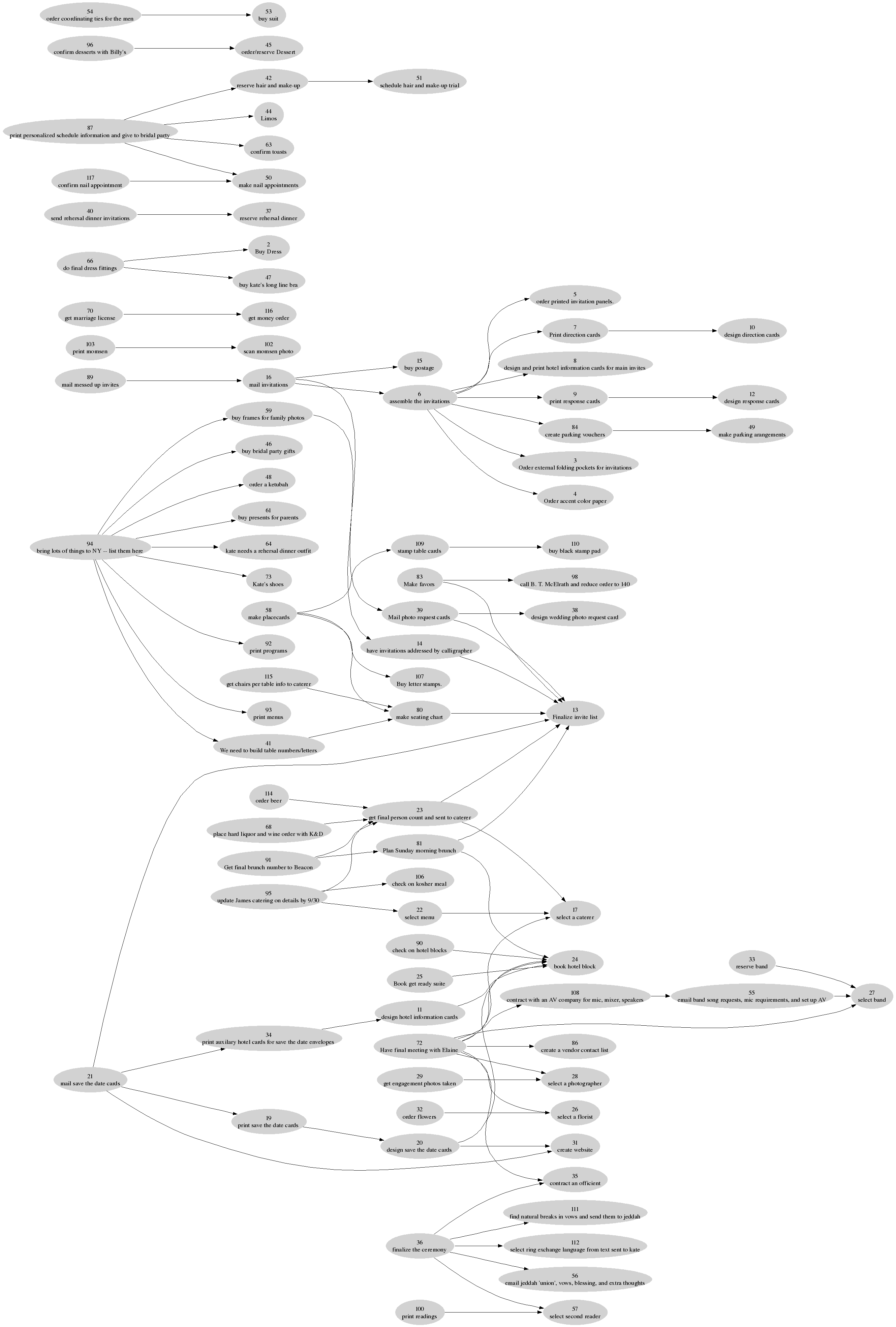

A Gamebook Report with Graphviz, Google Sheets, Python, and Jupyter/Colab

An 11 year old in our house needed to do a book report for school in the form of a board game and selected a gamebook, apparently the generic name for the trademarked Choose Your Own Adventure books. The non-linear narrative made the choice of board layout easy -- just use the graph of page transitions ("Turn to page 110").

The graphviz library is always my first choice when I want to visualize nodes and edges, and the python graphviz module provides a convenient way to get data into a renderable graph structure.

I wanted to work with the 11 year old as much as possible, so I picked a programming environment that can be used anywhere, jupyter notebooks, and we ran it in Google's free hosted version called colab.

The data entry was going to be the most time consuming part of the project, and something we wanted to be able to work on both together and apart. For that I picked a Google sheet. It gave us the right mix of ease of entry, remote collaboration, and a familiar interface. Python can read from Google sheets directly using the gspread module, saving a transcription/import step.

It took us a few weeks of evenings to enter the book's info into the data spreadsheet. The two types of data we needed were places, essentially nodes, and decisions, which are edges. For every place we recorded starting page, a description of what happens, and the page where you next make a decision or reach an ending. For every decision we recorded the page where you were deciding, a description of the choice, and the page to which you'd go next. As you can see in the data spreadsheet that was 139 places/nodes and 177 decisions/edges.

Once we'd entered all the data we were able to run a short python program to load the data from the spreadsheet, transform it into a graph object, and then render that graph as a pdf file. That we printed with a large format printer, and then the 11 year old layered on art, puzzles, rules, and everything else that turns a digraph into a playable game. The final game board is shown below, with a zoomed section in the album.
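The transform step is roughly the following. This is a sketch, not the notebook we ran: it skips gspread and the graphviz module and builds the DOT text by hand so it runs anywhere, the column names (start_page, summary, decision_page, page, choice, next_page) are hypothetical, and the rows are made up rather than taken from the book.

```python
def build_dot(places, decisions):
    """Turn place rows (nodes) and decision rows (edges) into DOT source."""
    # Each decision hangs off the place whose text ends at that decision page.
    place_by_decision_page = {p["decision_page"]: p["start_page"] for p in places}
    lines = ["digraph book {"]
    for p in places:
        label = "%d: %s" % (p["start_page"], p["summary"].replace('"', r'\"'))
        lines.append('  p%d [label="%s"];' % (p["start_page"], label))
    for d in decisions:
        src = place_by_decision_page[d["page"]]
        choice = d["choice"].replace('"', r'\"')
        lines.append('  p%d -> p%d [label="%s"];' % (src, d["next_page"], choice))
    lines.append("}")
    return "\n".join(lines)


# Made-up rows for illustration only.
places = [
    {"start_page": 1, "summary": "You wake up", "decision_page": 3},
    {"start_page": 10, "summary": "The cave", "decision_page": 12},
    {"start_page": 20, "summary": "The river", "decision_page": 21},
]
decisions = [
    {"page": 3, "choice": "Go left", "next_page": 10},
    {"page": 3, "choice": "Go right", "next_page": 20},
]
dot = build_dot(places, decisions)
```

With the graphviz module the string building goes away: a Digraph object takes the same information through its node() and edge() methods and handles rendering to pdf.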

One interesting thing about this particular book that was only evident once the full graph was in front of us was that the very first choice in the book splits you into one of two trees that never reconnect. Lots of later choices in the book loop back and cross over, but that first choice splits you into one of two separate books.
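Once the edges are in a list, that "two separate books" property can be checked rather than eyeballed. A small sketch, assuming edges are (from_page, to_page) pairs; the function names and page numbers are invented for the example.

```python
def reachable(edges, start):
    """Return the set of pages reachable from start by following edges."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        stack.extend(graph.get(node, []))
    return seen


def never_reconnect(edges, first, second):
    """True if the two starting pages lead to completely separate pages."""
    return reachable(edges, first).isdisjoint(reachable(edges, second))
```

Passing the two targets of the first choice as first and second answers the question directly, loops and crossovers inside each half included.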

I've omitted the title and author info from the book to stop this giant spoiler from showing up on google searches, but the 11 year old assures me it was a good, fun read and recommends it.

Apache To CloudFront With Lambda At Edge

I've been running my (this) vanity website and mail server on Linux machines I administer myself since 1998 or so, but it's time to rebuild the machine and hosting static HTTPS no longer makes sense in a world where GitHub or AWS can handle it flexibly and reliably for effectively free. I did want to keep running my own mail server, but centralization in email has made delivery iffy and everyone I'm communicating with is on gmail, so the data is going there anyway.

Because I've redone the ry4an.org website so many times and because cool URLs don't change I have a lot of redirects from old locations to new locations. With apache I grossly abused mod_rewrite to pattern match old URLs and transform them into new ones. No modern hosting provider is going to run apache, especially with mod_rewrite enabled, so I needed to rebuild the rules for whatever hosting option I picked.

Github.io won't do real redirects (only meta refresh tags), so that was right out. AWS's S3 lets you configure redirects using either a custom x-amz-website-redirect-location header on a placeholder object in the S3 bucket or some hoary XML routing rules at the bucket level, but neither of those allows anything more complicated than key prefix matching.

AWS's content delivery edge network, CloudFront, doesn't host content or generate redirects (it's just a caching proxy), but it lets you deploy javascript functions directly to the edge nodes which can modify requests and responses on their way in and out. With this Lambda at Edge capability you're restricted to specific releases of only the javascript runtime, but that's enough to get full regular expression matching with group extraction.
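The matching logic itself is small. Here is a sketch of the idea in Python, only to keep one language across the code on this page; the deployed function has to be javascript, and the patterns and target paths below are invented for illustration rather than copied from my real rules.

```python
import re

# Hypothetical rules: (compiled pattern, replacement using captured groups).
REDIRECTS = [
    (re.compile(r"^/unblog/post/(\d{4})-(\d{2})-(\d{2})/?$"), r"/posts/\1/\2/\3/"),
    (re.compile(r"^/resume\.html$"), "/resume/"),
]


def redirect_for(path):
    """Return a redirect description for an old path, or None to pass through."""
    for pattern, replacement in REDIRECTS:
        if pattern.search(path):
            return {"status": "301", "location": pattern.sub(replacement, path)}
    return None
```

A request whose path matches no rule returns None and would continue on to the origin untouched.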

Ry4an in Title Case

Python has a uniquely bad title case function which turns my already silly name into Ry4An, capitalizing the 'a' because it follows a non-letter character. I can't be sure that all the bulk email I get that's sent to Ry4An Brase has passed through Python's .title() function, but I've not found another language or framework with so bad an implementation.

At least Python warns you that their version is terrible right in the docstring for title and provides a slightly better one they suggest you paste directly into your code. There are, of course, better versions available in libraries like titlecase which handle things like not capitalizing articles.
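To see the problem, and one way around it for names like mine, here is a small sketch. The name_title helper is my own illustration, not the docstring's workaround and not the titlecase library; it simply treats digits as part of a word.

```python
import re


def name_title(text):
    """Capitalize the first character of each run of letters and digits."""
    return re.sub(
        r"[A-Za-z0-9]+('[A-Za-z0-9]+)?",
        lambda match: match.group(0).capitalize(),
        text,
    )


builtin = "ry4an brase".title()    # the built-in restarts after the digit
patched = name_title("ry4an brase")
```

Here builtin comes out as "Ry4An Brase" and patched as "Ry4an Brase". It still knows nothing about articles or other real title case rules.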

Other languages seem to avoid the fussiness of title case requirements by omitting it from the core language entirely (ruby, java), leaving it to third party implementors like rails and apache commons.

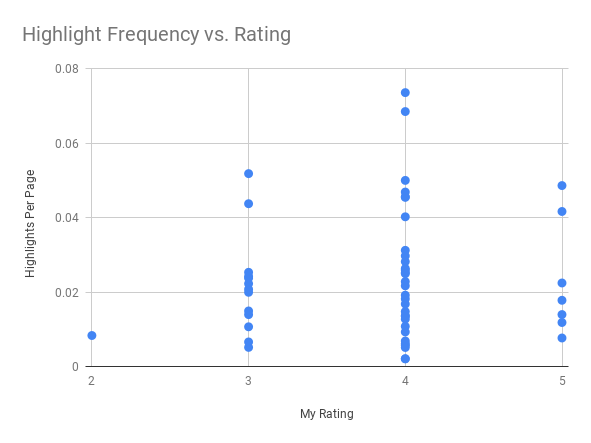

Kindle Highlights and Ratings

When reading I've always underlined sentences that make me happy. Once the kids got old enough to understand there's no email or fun on a Kindle I switched from dead tree books, and now the underlining is stored in Amazon's datacenters.

After a few years of highlighting on Kindle I started to wonder if the number of sentences that I liked and the eventual five-star scale rating I gave a book had any correlation. Amazon owns Goodreads and Kindle services sync data into Goodreads, but unfortunately highlight data isn't available through any API.

I was able to put together a little Python to scrape the highlight counts per book (yay, BeautifulSoup) and combine it with page count and rating info from the goodreads APIs. Our family scientist explained "the statistical tests to compare values of a continuous variable across levels of an ordinal variable", and there was no meaningful relationship. Still it makes a nice picture:

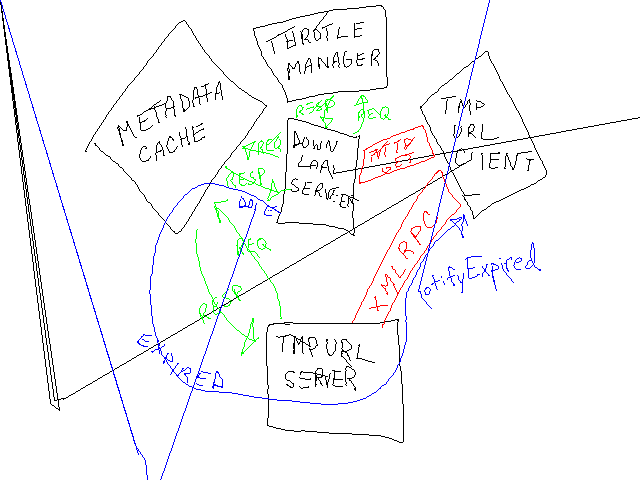

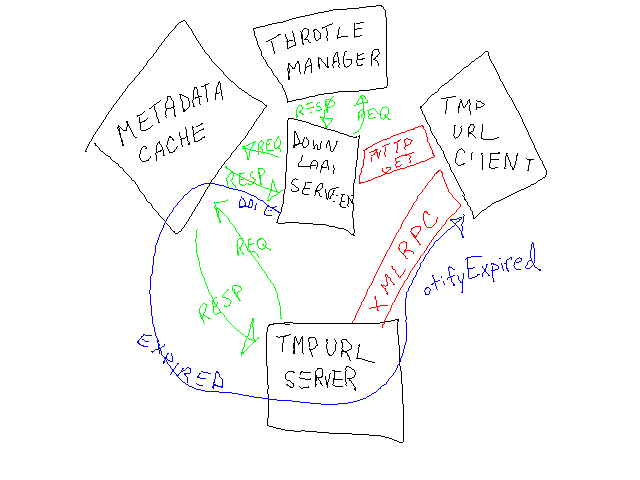

Home Alarm Analytics With AWS Kinesis

Home security system projects are fun because everything about them screams "1980s legacy hardware design". Nowhere else in the modern tech landscape does one program by typing in a three digit memory address and then entering byte values on a numeric keypad. There's no enter-key -- you fill the memory address. There's no display -- just eight LEDs that will show you a byte at a time, and you hope it's the address you think it is. Arduinos and the like are great for hobby fun, but these are real working systems whose core configuration you enter byte by byte.

The feature set reveals 30 years of crazy product requirements. You can just picture the well-meaning sales person who sold a non-existent feature to a huge potential customer, resulting in the boolean setting that lives at address 017 bit 4 and whose description in the manual is:

ON: The double hit feature will be enabled. Two violations of the same zone within the Cross Zone Timer will be considered a valid Police Code or Cross Zone Event. The system will report the event and log it to the event buffer. OFF: Two alarms from the same zone is not a valid Police Code or Cross Zone Event

I've built out alarm systems for three different homes now, and while occasionally frustrating it's always a satisfying project. This most recent time I wanted an event log larger than the 512 events I can view a byte at a time. The central dispatch service I use will sell me back my event log in a horrid web interface, but I wanted something programmatically accessible and ideally including constant status.

The hardware side of the solution came in the form of the Alarm Decoder from Nu Tech. It translates alarm panel keypad bus events into events on an RS-232 serial bus. That I'm feeding into a Raspberry Pi. From there the alarmdecoder package on PyPI lets me get at decoded events as Python objects. But, I wanted those in a real datastore.

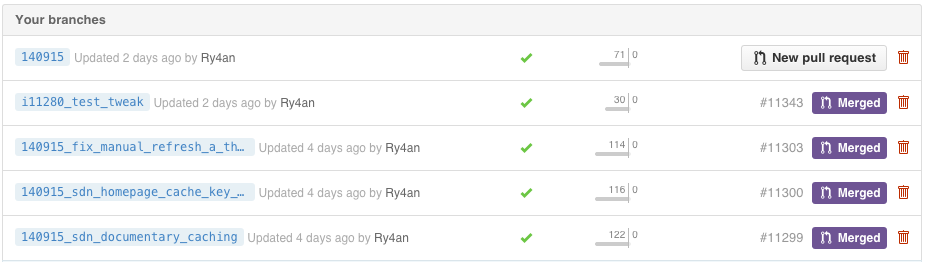

Pylint To Github

I spent a few hours trying to get the Jenkins Git & Github plugins to:

- run pylint on all remote branch heads that:

  - aren't too old

  - haven't already had pylint run on them

- send the repo status back to GitHub

I'm sure it's possible, but the Jenkins Git plugin doesn't like a single build to operate on multiple revisions. The repo statuses weren't posting, the wrong branches were getting built, and it was easier to write a quick script.

Now whenever someone pushes code at DramaFever pylint does its thing, and their most recent commit gets a green checkmark or a red cross. If/when they open a PR the status is already ready on the PR and warns folks not to merge it if pylint is going to fail the build. They can keep heaping on commits until the PR goes green.

I run it from Jenkins triggered by a GitHub push hook, but it's set up so that even running it from cron on the minute is safe for those without a CI server yet.
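The status-posting half boils down to one call to GitHub's commit status endpoint. This is a sketch of that piece rather than the script itself: it assumes any non-zero pylint exit code should mark the commit as failed, the helper names are made up, and it only builds the request so nothing goes over the network until you hand it to urlopen.

```python
import json
import urllib.request


def pylint_status(exit_code, context="pylint"):
    """Map a pylint exit code to a GitHub commit status payload."""
    ok = exit_code == 0
    return {
        "state": "success" if ok else "failure",
        "context": context,
        "description": "pylint passed" if ok
        else "pylint reported problems (exit %d)" % exit_code,
    }


def status_request(owner, repo, sha, token, payload):
    """Build (but don't send) the POST that attaches a status to a commit."""
    url = "https://api.github.com/repos/%s/%s/statuses/%s" % (owner, repo, sha)
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": "token " + token,
            "Accept": "application/vnd.github+json",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Because a status is keyed on the commit and the context, posting it again for the same commit just adds a newer status, which is part of why running on the minute is harmless.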

Bitcoin Conversion In Google Spreadsheets

I've been using Charlie Lee's excellent Google Spreadsheet Bitcoin tracker sheet for a while but it pulls data from a single exchange at a time and relies on the ordering of those exchanges on the bitcoinwatch.com site, which varies with volume.

I figured out I could get better numbers more reliably from bitcoinaverage.com, which (predictably) averages multiple exchanges over various time periods. They offer a great JSON API, but unfortunately Google spreadsheets only export JSON -- they don't have a function for importing it.

Nonetheless I was able to fake it using a regex. You can pull the 24 hour average price in with this formula:

=regexextract(index(importdata("https://api.bitcoinaverage.com/ticker/USD"),2,1), ": (.*),")+0
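The same trick outside a spreadsheet looks like this. It's a sketch that assumes the second line of the response is shaped like a JSON key, a colon, a number, and a trailing comma; the sample value in the note below is made up.

```python
import re


def extract_price(line):
    """Pull the number between ': ' and the trailing comma, as a float."""
    match = re.search(r": (.*),", line)
    return float(match.group(1)) if match else None
```

For a line such as `"24h_avg": 605.12,` this returns the number as a float, which is the job the +0 on the end of the formula does in the sheet.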

If you want that to update live (not just when you open the spreadsheet) you need to use Charlie's hack to get the sheet to think the formula depends on live stock data:

=regexextract(index(importdata("https://api.bitcoinaverage.com/ticker/USD?workaround="&INT(NOW()*1E3)&REPT(GoogleFinance("GOOG");0)),2,1), ": (.*),")+0

I've put together a sample spreadsheet based on Charlie's.

Occuped: Twine + Go + App Engine

In our NY office we've got 40 people working in a space with two bathrooms. Walking to the bathrooms, finding them both occupied, and grabbing a snack instead is a regular occurrence. For a lark I took a Twine with the breakout board and a few magnetic switches and connected them to the overtaxed bathroom doors.

The good folks at Twine will invoke a web hook on state change, so I created a tiny webapp in Go that takes the GET from Twine and stashes it in the App Engine datastore. I wrote a cheesy web front end to show the current state based on the most recent change. It also exposes a JSON API, allowing my excellent coworkers to build a native OS X menulet and a much nicer web version.

Amazon S3 as Append Only Datastore

As a hack, when I need an append-only datastore with no authentication or validation, I use Amazon S3. S3 is usually a read-only service from the unauthenticated web client's point of view, but if you enable access logging to a bucket you get full-query-parameter URLs recorded in a text file for GETs that can come from a form's action or via XHR.

There aren't a lot of internet-safe append-only datastores out there. All my favorite noSQL solutions divide permissions into read and/or write, where write includes delete. SQL databases let you grant an account insert without update or delete, but still none suggest letting them listen on a port that's open to the world.

This is a bummer because there are plenty of use cases when you want unauthenticated client-side code to add entries to a datastore, but not read or modify them: analytics gathering, polls, guest books, etc. Instead you end up with a bit of server side code that does little more than relay the insert to the datastore using over-privileged authentication credentials that you couldn't put in the client.

To play with this, first, create a file named vote.json in a bucket with contents like {"recorded": true}, make it world readable, set Cache-Control to max-age=0,no-cache and Content-Type to application/json. Now when a browser does a GET to that file's https URL, which looks like a real API endpoint, there's a record in the bucket's log that looks something like:

aaceee29e646cc912a0c2052aaceee29e646cc912a0c2052aaceee29e646cc91 bucketname [31/Jan/2013:18:37:13 +0000] 96.126.104.189 - 289335FAF3AD11B1 REST.GET.OBJECT vote.json "GET /bucketname/vote.json?arg=val&arg2=val2 HTTP/1.1" 200 - 12 12 9 8 "-" "lwp-request/6.03 libwww-perl/6.03" -

The full format is described by Amazon, but with client IP and user agent you have enough data for basic ballot box stuffing detection, and you can parse and tally the query arguments with two lines of your favorite scripting language.
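It comes to a few more than two lines once it's readable, but not many. A sketch, assuming the log lines have already been downloaded and that only GETs for vote.json count; the tally function name is made up for the example.

```python
import re
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

# The quoted request field, e.g. "GET /bucketname/vote.json?arg=val HTTP/1.1"
REQUEST = re.compile(r'"GET (\S+) HTTP/[\d.]+"')


def tally(log_lines, path_suffix="/vote.json"):
    """Count each (argument, value) pair seen in matching GET requests."""
    counts = Counter()
    for line in log_lines:
        match = REQUEST.search(line)
        if not match:
            continue
        url = urlsplit(match.group(1))
        if url.path.endswith(path_suffix):
            counts.update(parse_qsl(url.query))
    return counts
```

Fed the sample log line above, it counts one ("arg", "val") and one ("arg2", "val2").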

This scheme is especially great for analytics gathering because one never has to worry about full log disks, load balancers, backed up queues, or unresponsive data collection servers. When you're ready to process the data it's already on S3 near EC2, AWS Data Pipeline or Elastic MapReduce. Plus, S3 has better uptime than anything else Amazon offers, so even if your app is down you're probably recording the failed usage attempts.

Creating Burn Down Charts for GitHub Repositories Using Google Apps Script

At DramaFever I got folks to buy into burn down charts as the daily display for our weekly sprints, with the rotating release person being responsible for updating a Google spreadsheet with each day's end-of-day open and closed issue counts.

It works fine, and it's only a small time burden, but if one forgets there's no good way to get the previous day's counts. Naturally I looked to automation, and GitHub has an excellent API. My usual take on something like this would be to have cron trigger a script that:

- polls the GitHub API

- extracts the counts

- writes them to some data store

- builds today's chart from the historical data in the datastore

That could be easily done in bash, Python, or Perl, but it's the sort of thing that's inevitably brittle. It's too ad hoc to run on a prod server, so it'll run from a dev shell box, which will be down, or disk full, or rebuilt w/o cron migration or any of the million other ailments that befall non-prod servers.

Looking for something different I tried Google Apps Script for the first time, and it came out very well.

GitHub Jenkins Deploy Keys Config

GitHub doesn't let you use the same deploy key for multiple repositories within a single organization, so you have to either (a) manage multiple keys, (b) create a non-human user (boo!), or (c) use their not-yet-ready for primetime HTTP OAUTH deploy access, which can't create read-only permissions.

In the past to manage the multiple keys I've either (a) used ssh-agent or (b) specified which private key to use for each request using -i on the command line, but neither of those is convenient with Jenkins.

Today I finally thought about it a little harder and figured out I could use fake hostnames that map to the right keys within the .ssh/config file for the Jenkins user. To make it work I put stanzas like this in the config file:

    Host repo_name.github.com
        Hostname github.com
        HostKeyAlias github.com
        IdentityFile ~jenkins/keys/repo_name_deploy

Then in the Jenkins GitHub Plugin config I set the repository URL as:

git@repo_name.github.com:ry4an/repo_name.git

There is no host repo_name.github.com, and it wouldn't resolve in DNS, but that's okay: the .ssh/config tells ssh to actually connect to github.com, while the fake hostname still selects the right key.

Maybe this is obvious and everyone's doing it, but I found it the least-hassle way to maintain the accounts-for-people-only rule along with the separate-keys-for-separate-repos rule and without running the ssh-agent daemon for a non-login shell.

spdyproxy on Ubuntu 12.04 LTS

I'm often on unencrypted wireless networks, and I don't always trust everyone on the encrypted ones, so I routinely run a SOCKS proxy to tunnel my web traffic through an encrypted SSH tunnel. This works great, but I have to start the SSH tunnel before I start browsing -- that's okay IRC before reader -- but when I sleep the laptop the SSH tunnel dies and requires a restart before I can browse again. In the past I've used autossh to automate that reconnect, but it still requires more attention than it deserves.

So I was excited when I saw Ilya Grigorik's writeup on spdyproxy. With SPDY multiple independent bi-directional connections are multiplexed through a single TCP connection, in the same way some of us tried to do with web-mux in the late 90s (I've got a Java 1.1 implementation somewhere). SPDY connections can be encrypted, so when making a SPDY connection to an HTTP proxy you're getting an encrypted tunnel through which your HTTP connections to anywhere can be tunneled, and probably getting a TCP setup/teardown speed boost as well. Ilya's excellent node-spdyproxy handles the server side of that setup admirably and the rest of this writeup covers my getting it running (with production accouterments) on an Ubuntu 12.04(.1) LTS system.

With the below setup in place I can use a proxy.pac to make sure my browser never sends an unencrypted byte through whatever network I'm on -- DNS lookups excluded, you still need SOCKSv4a or SOCKSv5 to hide those.

Android Fragmentation Analogy

This whole post should just be a single tweet, but somehow it doesn't feel safe to say anything comparing Android and iOS development, even implicitly, without five paragraphs full of disclaimers. First, here's the tweet:

Developers complaining about fragmentation on the Android platform are like fashion designers complaining about size fragmentation on the human body.

Expanding on that I'd say, yes, it's easier to make something that fits beautifully if you know the exact size and shape you're targeting and don't have to think about how it will look on other form factors / body shapes. Some designers create clothes for only the fashion model body shape, and some create clothes that fit a wider variety of body shapes.

An average Android application that doesn't do anything particularly out of the norm visually and avoids undocumented behaviors, does just fine on any device at its target version or later. It might not be visually stunning, but it's worth noting that "just fine" is always available, and plenty of great apps have taken it.

On the other hand, developers that reach for visual excellence on the Android platform do have a hard row to hoe, in the same way that making clothing that's flattering on all body shapes is very hard. Things have to be extensively tweaked and tested. If you're building Flipboard or a game engine on Android, then I totally get that the variety of resolutions, form factors, and input options makes your job harder.

To be clear, I'm not drawing any analogies between iOS devices and fashion models excepting the uniformity of shape/form factor, and I'm not saying anything particularly negative about designers that stop at size two or developers that only do iOS.

I'm just saying that Android fragmentation isn't inherently a problem, and that while it makes stunningly beautiful applications harder, it doesn't make the basic "getting stuff done" application that represents 99% of both the iOS and Android app stores any harder. Most iOS and Android apps are just using the usual controls in the usual way, and wouldn't be affected by Android fragmentation in any meaningful way.

OS X Linux Clipboard Sharing

My primary home machine is a Linux desktop, and my primary work machine is an OS X laptop. I do most of my work on the Linux box, ssh-ed into the OS X machine -- I recognize that's the reverse of usual setups, but I love the awesome window manager and the copy-on-select X Window selection scheme.

My frustration is in having separate copy and paste buffers across the two systems. If I select something in a work email, I often want to paste it into the Linux machine. Similarly if I copy an error from a Linux console I need to paste it into a work email.

There are a lot of ways to unify clipboards across machines, but they're all either full-scale mouse and keyboard sharing, single-platform, or GUI tools.

Finding the excellent xsel tool, I cooked up some command lines that would let me shuttle strings between the Linux selection buffer and the OS X system via ssh.

I put them into the Lua script that is the shortcut configuration for awesome and now I can move selections back and forth. I also added some shortcuts for moving text between the Linux selection (copy-on-select) and the clipboard (copy-on-keypress).

    -- Used to shuttle selection to/from mac clipboard
    select_to_mac = "bash -c '/usr/bin/xsel --output | ssh mac pbcopy'"
    mac_to_select = "bash -c 'ssh mac pbpaste | /usr/bin/xsel --input'"

    -- Used to shuttle between selection and clipboard
    select_to_clip = "bash -c '/usr/bin/xsel --output | /usr/bin/xsel --input --clipboard'"
    clip_to_select = "bash -c '/usr/bin/xsel --output --clipboard | /usr/bin/xsel --input'"

    awful.key({ modkey, }, "c", function () awful.util.spawn(mac_to_select) end),
    awful.key({ modkey, }, "v", function () awful.util.spawn(select_to_mac) end),
    awful.key({ modkey, "Shift" }, "c", function () awful.util.spawn(clip_to_select) end),
    awful.key({ modkey, "Shift" }, "v", function () awful.util.spawn(select_to_clip) end),

Asynchronous Python Logging

The Python logging module has some nice built-in LogHandlers that do network IO, but I couldn't square with having HTTP POSTs and SMTP sends in web response threads. I didn't find an asynchronous logging wrapper, so I wrote a decorator of sorts using the really nifty monkey patching available in Python:

    import queue
    import sys
    import threading

    def patchAsyncEmit(handler):
        """Replace handler.emit with a version that only enqueues the record."""
        base_emit = handler.emit
        records = queue.Queue()

        def loop():
            while True:
                record = records.get(True)  # blocks
                try:
                    base_emit(record)
                except Exception:  # not much you can do when your logger is broken
                    print(sys.exc_info())

        thread = threading.Thread(target=loop)
        thread.daemon = True  # don't keep the process alive just for logging
        thread.start()

        def asyncEmit(record):
            records.put(record)

        handler.emit = asyncEmit
        return handler

In a more traditional OO language I'd do that with extension or a dynamic proxy, and in Scala I'd do it as a trait, but this saved me having to write delegates for all the other methods in LogHandler.

Did I miss this in the standard logging stuff, does everyone roll their own, or is everyone else okay doing remote logging in a web thread?
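For what it's worth, Python 3.2 and later ship logging.handlers.QueueHandler and QueueListener, which cover much the same ground without the monkey patching. A minimal sketch: the ListHandler class is a stand-in I made up for a slow network handler, and make_async_logger is likewise just a name for the example.

```python
import logging
import logging.handlers
import queue


class ListHandler(logging.Handler):
    """Stand-in for a slow network handler; it just collects messages."""

    def __init__(self):
        super().__init__()
        self.messages = []

    def emit(self, record):
        self.messages.append(record.getMessage())


def make_async_logger(name, slow_handler):
    """Return (logger, listener); the listener's thread does the slow work."""
    pending = queue.Queue()
    listener = logging.handlers.QueueListener(pending, slow_handler)
    listener.start()
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addHandler(logging.handlers.QueueHandler(pending))
    logger.propagate = False
    return logger, listener
```

The web thread only pays for a queue put, and calling listener.stop() at shutdown drains whatever is still queued before returning.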

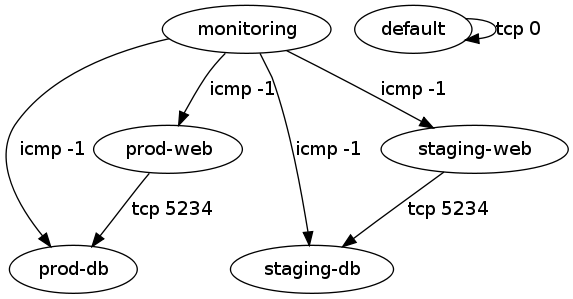

A Few Quick EC2 Security Group Migration Tools

Like half the internet I'm working on duplicating a setup from one Amazon EC2 region to another. I couldn't find a quick way to do that if my stuff wasn't already described in Cloud Formation templates, so I put together a script that queries the security groups using ec2-describe-group and produces a shell script that re-creates them in a different region.

If all your ec2 command line tools and environment variables are set you can mirror us-east-1 to us-west-1 using:

    ec2-describe-group | ./create-firewall-script.pl > create-firewall.sh
    ./create-firewall.sh

With non-demo security group data I ran into some group-to-group access grants whose purpose wasn't immediately obvious, so I put together a second script using graphviz to show the ALLOWs. A directed edge can be read as "can access".

That script can also be invoked as:

ec2-describe-group | ./visualize-security-groups.pl > groups.png

The labels on the edges can be made more detailed, but having each of tcp, udp, and icmp shown started to get excessive.

Both scripts and sample input and output are in the provided tarball.

reStructuredText Resume

I've had a resume in active maintenance since the mid 90s, and it's gone through many iterations. I started with a Word document (I didn't know any better). In the late 90s I moved to parallel Word, text, and HTML versions, all maintained separately, which drifted out of sync horribly. In 2010 I redid it in Google Docs using a template I found whose HTML hinted at a previous life in Word for OS X. That template had all sorts of class and style stuff in it that Google Docs couldn't actually edit/create, so I was back to hand-editing HTML and then using Google Docs to create a PDF version. I still had to keep the text version current separately, but at least I'd decided I didn't want any job that wanted a Word version.

When I decided to finally add my current job to my resume, even months after starting, I went for another overhaul. The goal was to have a single input file whose output provided HTML, text, and PDF representations. As I saw it that made the options: LaTeX, reStructuredText, or HTML.

I started down the road with LaTeX, and found some great templates, articles, and prior examples, but it felt like I was fighting with the tool to get acceptable output, and nothing was coming together on the plain text renderer front.

Next I turned to reStructuredText, and found it yielded a workable system. I started with Guillaume Chéreau's blog post and template and used the regular docutils tool rst2html to generate the HTML versions. The normal route for turning reStructuredText into PDF using docutils passes through LaTeX, but I didn't want to go that route, so I used rst2pdf, which gets there directly. I counted the reStructuredText version as close-enough to text for that format.

Since now I was dealing entirely with a source file that compiled to generated outputs it only made sense to use a Makefile and keep everything in a Mercurial repository. That gives me the ability to easily track changes and to merge across multiple versions (different objectives) should the need arise. With the Makefile and Mercurial in place I was able to add an automated version string/link to the resume so I can tell from a print out which version someone is looking at. Since I've always used a source control repository for the HTML version it's possible to compare revisions back to 2001, which get pretty silly.

I'm also proud to say that the URL for my resume hasn't changed since 1996, so any printed version ever includes a link to the most current one. Here are links to each of those formats: HTML, PDF, text, and repository, where the text version is the one from which the others are created.

Automatic SSH Tunnel Home As Securely As I Can

After watching a video from Defcon 18 and seeing a tweet from Steve Losh I decided to finally set up an automatic SSH tunnel from my home server to my traveling machines. The idea being that if I leave the machine somewhere or it's taken I can get in remotely and wipe it or take photos with the camera. There are plenty of commercial software packages that will do something like this for Windows, Mac, and Linux, and the highly-regarded, open-source prey, but they all either rely on a third-party service or have a lot more than a simple back-tunnel.

I was able to cobble together an automatic back-connect from laptop to server using standard tools and a lot of careful configuration. Here's my write up, mostly so I can do it again the next time I get a new laptop.

BoingBoing Posts in Rogue

Previously I mentioned I was importing the full corpus of BoingBoing posts into MongoDB, which went off without a hitch. The import was just to provide a decent dataset for trying out Rogue, the Mongo searching DSL from the folks at Foursquare. Last weekend I was in New York for the Northeast Scala Symposium and the Foursquare Hackathon, so I took the opportunity to finish up the query part while I had their developers around to answer questions.

Loading BoingBoing into MongoDB with Scala

I want to play around with Rogue by the Foursquare folks, but first I needed a decent-sized collection of items in a MongoDB. I recalled that BoingBoing had just released all their posts in a single file, so I downloaded that and put together a little Scala to convert from XML to JSON. The built-in XML support in Scala and the excellent lift-json DSL turned the whole thing into no work at all:

Traffic Analysis In Perl and Scala

I needed to implement the algorithm in Practical Traffic Analysis Extending and Resisting Statistical Disclosure in a hurry, so I turned to my old friend Perl. Later, when time permitted I re-did it in my new favorite language, Scala. Here's a quick look at how a few different pieces of the implementation differed in the two languages -- and really how idiomatic Perl and idiomatic Scala can look pretty similar when one gets past syntax.

Syntax Highlighting and Formulas for Blohg

I'm thus far thrilled with blohg as a blogging platform. I've got a large post I'm finishing up now with quite a few snippets of source code in two different programming languages. I was hoping to use the excellent SyntaxHighlighter javascript library to prettify those snippets, and was surprised to find that docutils reStructuredText doesn't yet do that (though some other implementations do).

Fortunately, adding new rendering directives to reStructuredText is incredibly easy. I was able to add support for a .. code mode with just this little bit of Python:

Blacklisting Changesets in Mercurial

Distributed version control systems have revolutionized how software teams work, by making merges no longer scary. Developers can work on a feature in relative isolation, pulling in new changes on their schedule, and providing results back on their (manager's) timeline.

Sometimes, however, a developer working in their own branch can do something really silly, like commit a huge file without realizing it. Only after they push to the central repository does the giant size of the changeset become known. If one catches it quickly, one just removes the changeset and all is will.

If other developers have pulled that giant changeset, you're in a slightly harder spot. You can remove it from your repository and ask other developers to do the same, but you can't force them to do so. Unwanted changesets let loose in a development group have a way of getting pushed back into a shared repository again and again.

To ban the pushing of a specific changeset to a Mercurial repository one can use this terse hook in the repository's .hg/hgrc file:

[hooks]

pretxnchangegroup.ban1 = ! hg id -r d2cfe91d2837 > /dev/null 2>&1

Where d2cfe91d2837 is the node id of the forbidden changeset.

That's fine for a single changeset, but if you have more than a few to ban, this form avoids having a hook per changeset:

[hooks]

pretxnchangegroup.ban = ! hg log --template '{node|short}\n' \

-r $HG_NODE:tip | grep -q -x -F -f /path/to/banned

where /path/to/banned is a file of disallowed changesets, one per line, like:

acd69df118ab

417f3c27983b

cc4e13c92dfa

6747d4a5c45d

It's probably prohibitively hard to ban changesets in everyone's repositories, but at least you can set up a filter on shared repositories and publicly shame anyone who pushes them.
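The grep in that second hook is doing nothing fancier than a whole-line, fixed-string match of each incoming short node id against the banned file. If the shell is hard to read, here is a minimal Python sketch of the same check. The name `find_banned` is made up for illustration and is not part of Mercurial; the sketch assumes the incoming short node ids have already been collected, for example with the `hg log` command above.

```python
def find_banned(incoming_nodes, banned_lines):
    """Return the incoming short node ids that appear in the banned list.

    Mirrors `grep -x -F -f banned`: whole-line, fixed-string matching.
    Blank lines in the banned file are ignored here.
    """
    banned = {line.strip() for line in banned_lines if line.strip()}
    return [node for node in incoming_nodes if node.strip() in banned]


# Hypothetical data: two banned ids, and a push containing one of them.
banned_file = ["acd69df118ab\n", "417f3c27983b\n"]
incoming = ["0123456789ab", "417f3c27983b"]
print(find_banned(incoming, banned_file))
```

A hook built on this would exit non-zero whenever the returned list is non-empty, which is what makes Mercurial roll the incoming transaction back.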

Switching Blogging Software

This blog started out called the unblog back when blog was a new-ish term and I thought it was silly. I'd been on mailing lists like fork and Kragen Sitaker's tol for years and couldn't see a difference between those and blogs. I set up some mailing list archive software to look like a blog and called it a day.

Years later that platform was aging, and wikis were still a new and exciting concept, so I built a blog around a wiki. The ease of online editing was nice, though readers never took to wiki-as-comments like I hoped. It worked well enough for a good many years, but I kept having a hard time finding my own posts in Google. Various SEO-blocking strategies Google employs that I hope never to have to understand were pushing my entries below total crap.

Now, I've switched to blohg as a blogging platform. It's based on Mercurial, my version control system of choice, and has a great local-test and push-to-publish setup. It uses reStructuredText, which is what wiki text became, and reads great as source or renders to HTML. Thanks to Rafael Martins for the great software, templates, and help.

The hardest part of the whole setup was keeping every URL I've ever used internally for this blog still valid. URLs that "go dead" are a huge pet peeve of mine. Major, should-know-better sites do this all the time. The new web team brings up a brand new site, and every URL you'd bookmarked either goes to a 404 page or to the main page. URLs are supposed to be durable, and while it's sometimes a lot of work to keep that promise, it's worth it.

In migrating this site I took a couple of steps to make sure URLs stayed valid. I wrote a quick script to go through the site's HTTP access logs for the last few months, pull out every URL that got a non-404 response, and request each one from the staging site to confirm that I had all the redirects in place and that the old URLs yielded the same content. I followed essentially the same procedure when I switched from mailing list to wiki, so I had to re-aim all those redirects too. Finally, I ran a web spider against the staging site to make sure it had no broken internal links. Which is all to say, if you're careful you can totally redo your site without breaking people's bookmarks and search results -- please let me know if you find a broken one.
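A rough sketch of the log-scraping step, in Python. This is an illustration rather than the original script: it assumes Apache's common or combined log format, and the name `paths_worth_keeping` is hypothetical.

```python
import re

# Pulls the request path and the status code out of a log line such as:
# 1.2.3.4 - - [10/Oct/2010:13:55:36 -0700] "GET /unblog/ HTTP/1.1" 200 2326
LOG_LINE = re.compile(r'"(?:GET|HEAD) (\S+) [^"]*" (\d{3})(?:\s|$)')


def paths_worth_keeping(log_lines):
    """Return the sorted, de-duplicated paths that got a non-404 response."""
    paths = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and match.group(2) != "404":
            paths.add(match.group(1))
    return sorted(paths)
```

Each path that comes back would then be requested from the staging host, and anything that fails there needs a redirect before the switch.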

Mercurial Remote Test Runner via Push

I heard someone in IRC saying that the mercurial test suite was bogging down their laptop, so I set up a quick push-test service for the mercurial crew. If you're in crew and you do a push to ssh://hgtester@ry4an.org:2222/ these steps will be taken:

- a local clone of the crew repo is updated from intevation.de

- a new, disposable local clone is created from that crew clone

- your csets are pushed to that new clone

- the working directory is updated to 'tip'

- a build is done

- the test suite is run

- the build and results show up in your stdout

- the new clone (and your pushed csets) are deleted

It's on a reasonably fast, unloaded box so the test suite runs in about 3 mins 30 seconds. Thanks to ThomasAH for providing the crew pubkeys. If you're not in crew and want to use the service please contact me and convince me you're not going to write a test that does a "rm -rf ~", because that would completely work.

Unfortunately, the output is getting buffered somewhere so there's no output after "searching for changes" for almost 4 minutes, but the final output looks as attached.

The machine's RSA host key fingerprint is: ac:81:ac:0b:47:f4:20:a1:4d:7e:6a:c5:62:ba:62:be. (updated 2010/06/07)

The scripts can be viewed here: http://bitbucket.org/Ry4an/hgtester/

If all that was gibberish, we now return you to your regularly scheduled silence.

Remote Repository Creation for Mercurial Over HTTP

I park in the #mercurial IRC channel a lot to answer the easy questions, and one that comes up often is, "How can I create a remote repository over HTTP?". The answer is: "You can't.".

Mercurial allows you to create a repository remotely using ssh with a command line like this:

hg clone localrepo ssh://host//abs/path

but there's no way to do that over HTTP using either hg serve or hgweb behind Apache.

I kept telling people it would be a very easy CGI to write, so a few months back I put my time where my mouth was and did it:

#!/bin/sh

echo -n -e "Content-Type: text/plain\n\n"

mkdir -p /my/repos/$PATH_INFO

cd /my/repos/$PATH_INFO

hg init

That gets saved in unsafecreate.cgi and pointed to by Apache like this:

ScriptAlias /unsafecreate /path/to/unsafecreate.cgi

and you can remotely invoke it like this:

http://your-poorly-admined-host.com/unsafecreate/path/to/new/repo

That's littered with warnings about its lack of safety and bad administrative practices because you're pretty much begging for someone to do this:

http://your-poorly-admined-host.com/unsafecreate/something%3Brm%20-rf%20

Which is not going to be pretty, but on a LAN maybe it's a risk you can live with. Me? I just use ssh.

At the time I first suggested this someone chimed in with a cleaned up version in the original pastie, but it's no safer.
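Most of the danger comes from trusting `PATH_INFO` verbatim. Here is a minimal Python sketch of the sort of validation a less reckless version would want before touching the filesystem. The name `safe_repo_path` and the character whitelist are assumptions for illustration, and validation alone doesn't make an unauthenticated create endpoint a good idea.

```python
import posixpath
import re

# Conservative whitelist: letters, digits, dot, underscore and dash only.
SAFE_COMPONENT = re.compile(r"[A-Za-z0-9._-]+")


def safe_repo_path(base, path_info):
    """Return base joined with path_info, or None if path_info looks hostile."""
    parts = [part for part in path_info.split("/") if part]
    if not parts:
        return None
    for part in parts:
        # Rejects "..", hidden names such as ".hg", spaces, ";" and friends.
        if part.startswith(".") or not SAFE_COMPONENT.fullmatch(part):
            return None
    return posixpath.join(base, *parts)
```

With this in place `/path/to/new/repo` maps to a directory under the base, while the `something;rm -rf ` request above is refused before any command runs.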

Grand Central Direct Dialer

I'm a huge fan of Grand Central's call screening features. It's irksome, however, that they make it hard to dial outward -- sending your GC number instead of your cell number as the caller id. To do so you need to first add the target number to your address book, and often I'm calling someone I don't intend to call again often.

I started scripting up a way around that when I saw someone named Stewart already had.

I wanted to be able to easily dial outbound from my cellphone, so I created a mobile friendly web form around his script. The script requires info you should never give out (username, password, etc.), so you should really download the script and run it on your own webserver.

It also generates a bookmarklet you can drag to your browser's toolbar that will automatically dial any selected/highlighted phone number from your GC Number.

Comments

Only to save someone else the time: The iPhone app, Grand Dialer, does the same thing from an iPhone. Everyone says it's excellent.

Autobahn Accelerator for iTunes

My company, Swarmcast, announced one of our first public releases today. Previously we've been primarily selling to content providers, but now we're putting out a free, user-facing release. If you download our Autobahn Accelerator for iTunes you'll find your purchases from the iTunes music store come down three to ten times faster than they did before. We'll be adding support for lots of other sites (YouTube, etc.) in upcoming weeks.

Sadly we've got a MacOS version done, but the installation was deemed too clumsy for the polished Mac experience, so we'll have to wait a few weeks to get that out. Windows only for now (says this Linux user).

View Any Simon Delivers Order

I forwarded a Simon Delivers order receipt email on to a friend, and he was able to view the order without being logged in as me. Turns out that if you have a Simon Delivers account at all they let you view any order. I created a quick web form to let anyone view any order using my account. Here's my favorite order so far:

| Qty | Item Name | Each |

| 1 | Cetaphil Moisturizing Lotion | $10.99 |

| 2 | Coke Diet - 24/12 oz. Cans | $7.49 |

| 2 | Dr Pepper Diet - 24/12 oz. Cans | $7.49 |

| 2 | Hershey's Milk Chocolate Candy Bars - 6 ct. | $3.49 |

| 2 | Life Savers Wintergreen Flavored - Individually Wrapped - Bag | $1.89 |

| 1 | Nabisco Nutter Butter Peanut Butter Sandwich Cookies | $3.79 |

| 1 | Nestea Cool Lemon Iced Tea Fridge Pack - 12/12 oz. Cans | $4.19 |

| 2 | Pepsi - 24/12 oz. Cans | $7.49 |

| 2 | Pepsi 8/12 oz. Bottles | $3.69 |

| 1 | Seven-Up - 12/12 oz. Cans | $4.19 |

| 2 | Seven-Up Diet - 12/12 oz. Cans | $4.19 |

I'm sure fixing this problem is as simple as adding whatever the .asp equivalent of this is:

if (currentUser != order.user) {

return;

}

Funny, though.

If you try your own and stumble across any funny ones, put the order number in the comments.

Comments

3885593 - Five boxes of cereal and two gallons of milk. -- Nick

2566520 - 15 gallons of bottled water, Milk Bones, and an issue of Minnesota Parent magazine. -- Dan

The Wedding Planned With Bugzilla

If things have been a little sparse around here over the last year or so it's because outside of work the bulk of my organizational and creative energies have been going into the planning of our wedding.

The wedding was this weekend, and everything was spectacular. Photos and details can be found on the wedding website.

I've come away from the wedding planning experience with this advice for guys: Don't bother helping; no one but your fiancée/wife will believe you've done anything, and she's already in love with you.

Kate and I got no end of comments and jokes predicated on the notion that the guy never does anything to help with the wedding, and despite her earnest protestations to the contrary, you could tell that people came away with a belief that at most I probably helped pick the cake or something.

That assumption was all the more maddening because, in fact, my tendency to over plan events was perfect for a wedding. I'd been waiting for just this sort of opportunity to plan a large event and in doing so to put a record keeping theory to the test. -- By now it should be obvious that Kate, my wife, is a very patient woman.

For years I'd watched an event planner who worked out of the same coffee shop I did practice her trade. So nearly as I could tell she lived entirely in a world of post-it notes and phone calls. On any given day I'd watch 500 different pieces of information flit before her mental windshield with no discernible organizational system I could recognize. It drove me crazy. I wanted to offer to help her come up with a computer based solution that would patch all the holes in her process I was sure had to plague her on every project.

Meanwhile, I was sitting next to her working on computer software, which for any project of reasonable size includes tracking thousands of details. Among those details are defects, bugs, and any team with any hope of success uses a bug tracker system to keep them documented. The most popular, but certainly not the most user-friendly, bug tracker is Bugzilla. I like it a great deal.

I became certain that more than a spreadsheet or calendar or MS Project, event planning required a bug tracker. I was pretty sure that Bugzilla could be put to work to keep good logs of tasks, dependencies, and details in exactly the right fashion.

As alluded to previously, Bugzilla has a user-interface that only a software developer could love. Kate's not a software developer, so there was some initial resistance, but she's a trouper and took to it eventually. File attachments held contracts, and comments included phone logs. We were planning the wedding long distance so most communications were electronic.

In the end it worked well -- no details fell through the cracks -- but it was probably overkill for a two-person project. Something like Basecamp is probably a much better fit. Bugzilla does have some nearly useless charts that allowed me to produce the horrible dependency graph below:

Display Google Calendars with PHP iCalendar

Google has a new calendar service, and it's great. I really try to avoid hosted data solutions, but this one's just too good to pass up. My one gripe is that there's no easy way for non google calendar users to view the calendars. They're available live as both ical and rss/xml files, but the average home web user doesn't know what to do with either of those.

There are plenty of services out there that will display an ical file as a web page, but none of them I tested rendered the google ical output well, and all of them were packed with ads. Previously, I'd used software called phpicalendar to display ical files created by my old calendaring solution on the web, so I started there. It didn't parse the google output well either. However, with a little tweaking (see the patch in the zip file below) and some Apache trickery (see the README in the zip file) I can now get good phpicalendar output from google.

google-calendar-phpicalendar-2.22.zip

Update: Looks like now google offers a good way to do this.

Comments

Hey man I really want to get my php iCalendar working with my new Google Calendar as you have, but my server is not a Linux box, so I don't have a good way to patch the diff file you included in the zip. I was wondering if you would be willing to upload the actual files that you changed, or would you be willing to email them to me. I would really appreciate it.

Hrm, not to be unhelpful, but if you read the patch file you'll see I just commented out one block and added a simple if test somewhere else. It should be very easy to do by hand on the two files. The unified diff format is nice in that it's quite human readable despite being ready for machine processing. -- Ry4an

I'm not familiar with php icalendar, but (stupid question...) if using your work around, and I keep making new events in the google calendar, will they show up in the icalendar, or will some kind of cron job be required? (maybe I should just use the icalendar... but the google site is so seductive....) --Rebecca, cookieshouse.com

Yes, my phpicalendar hack does a live display of the google data. One could use phpicalendar all by itself, but I like the invites, access controls, and UI from google calendar well enough that I thought it was worth trying to have phpicalendar do a live display of data I keep in google calendar. -- Ry4an

I was all excited to work on this little project. Then I realized I don't have the ability to apply patches (or if I do, I haven't a clue how to). Thanks for sharing though, it looks super cool on your site! --Rebecca, cookieshouse.com

I could not get your method to work so I had to rework the ical_parser.php. I recreated the $cal_filelist array with my google calendar urls. Then so the names of the calendars were not "basic" I created another array called $cal_names. Here maybe some source code will make this more clear. At about line 102 of ical_parser.php

$cal_filelist = array ("http://calendar url 1", "http://calendar url 2");

$cal_names = array ("Calendar Name 1","Calendar Name 2");

$counter = 0;

foreach ($cal_filelist as $cal_key=>$filename) {

// Find the real name of the calendar.

//$actual_calname = getCalendarName($filename); original code commented out

$actual_calname = $cal_names[$counter];

$counter++;

}

-- Psycho Whale

That's a more general solution than my quick hack. You might want to submit your code changes back to the phpicalendar project using their patch tracker. I'm sure they're getting all sorts of "support google calendar" requests and yours is a good step toward that. -- Ry4an

I applied your patch... no problem. But when I did the .htaccess edit, it would not redirect the .ics file to the google calendar. I then edited the config.inc.php to allow webcals and added the exact path of the .ics (which would be redirected) in the "$list_webcals[] = *;" area. No dice. It would then give me an error (which is strange since the file actually existed in that spot). Any idea what I'm doing wrong? Do I need to edit the "$default_path" in the config.inc.php to show the path to the redirected .ics file also? I'd love to get this thing to work but it doesn't seem to be happening. Tried Psycho Whale's solution also but that worked even less. Not sure if he was editing the ical_parser.php before or after your patch or if that even was relevant. Lots of questions. Any help? -- RSmith423

If the .htaccess file is ignoring your Redirect line it's because your httpd.conf file isn't set to allow Redirect lines in .htaccess files. You can either edit httpd.conf to allow Redirect lines in .htaccess files or you can just put the Redirect line directly into the httpd.conf file. Instructions for both can be found in the Apache online help. -- Ry4an

I noticed that recurring events don't display correctly. If you have a recurring event the start time and end time is always the same.

Here is my hack to PHP iCalendar to make it work:

in ical_parser.php:

my code:

ereg('^PT([0-9]+)S', $data, $duration);

$the_duration = $duration[1];

replaces this original code:

ereg ('^P([0-9]{1,2}[W])?([0-9]{1,2}[D])?([T]{0,1})?([0-9]{1,2}[H])?([0-9]{1,2}[M])?([0-9]{1,2}[S])?', $data, $duration);

$weeks = str_replace('W', '', $duration[1]);

$days = str_replace('D', '', $duration[2]);

$hours = str_replace('H', '', $duration[4]);

$minutes = str_replace('M', '', $duration[5]);

$seconds = str_replace('S', '', $duration[6]);

$the_duration = ($weeks * 60 * 60 * 24 * 7) + ($days * 60 * 60 * 24) + ($hours * 60 * 60) + ($minutes * 60) + ($seconds);

Apparently Google uses seconds to specify the duration of the event, but PHP iCalendar expects the duration in hour minute second format.

Thanks for the patch!

-Charles
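A note on the duration hack above: the original pattern only allows one or two digits per field, which is why a value like PT3600S falls through, but replacing it with a seconds-only pattern drops the other forms. Both are legal iCalendar DURATION values, so a parser can accept either. Here is a small sketch of that idea in Python (not PHP iCalendar code; `duration_seconds` is a made-up name):

```python
import re

# iCalendar DURATION: optional sign, "P", then weeks and/or days,
# then an optional "T" followed by hours, minutes and seconds.
DURATION = re.compile(
    r"^(?P<sign>[+-])?P"
    r"(?:(?P<weeks>\d+)W)?"
    r"(?:(?P<days>\d+)D)?"
    r"(?:T(?:(?P<hours>\d+)H)?(?:(?P<minutes>\d+)M)?(?:(?P<seconds>\d+)S)?)?$"
)


def duration_seconds(value):
    """Convert a DURATION such as 'PT3600S' or 'P1DT2H30M' to seconds."""
    match = DURATION.match(value.strip())
    if not match:
        raise ValueError("not a duration: %r" % value)
    groups = match.groupdict()
    weeks, days, hours, minutes, seconds = (
        int(groups[name] or 0)
        for name in ("weeks", "days", "hours", "minutes", "seconds")
    )
    total = ((weeks * 7 + days) * 24 + hours) * 3600 + minutes * 60 + seconds
    return -total if groups["sign"] == "-" else total
```

Because the fields take any number of digits, Google's all-seconds durations and the hour/minute/second style parse with the same function.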

The only thing I had to do to get GoogleCalendar to work was the following:

phpicalendar/config.inc.php: $allow_webcals = 'yes';

phpicalendar/config.inc.php: $timezone = 'Europe/Paris';

php.ini: allow_url_fopen = On

And it worked right out of the box ...

http:// YOUR-SITE /phpicalendar/month.php?cal=http://www.google.com/calendar/ical/ YOUR-GMAIL /public/basic&getdate=20060518

Thomas.

Excellent, maybe they've updated. I kept having it refuse to display any webcal URL that didn't end in '.ics'; perhaps that's been fixed. Also, I found I needed to add some link text to the blank free/busy view entries for them to be clickable, but that would only be required if you use the free/busy (rather than full detail) view gcalendar provides. -- Ry4an

Improving Nick Tracking using String Similarity

Years back I wrote an IRC nick tracking script. It's served me well since then, but it has one major annoyance. When people changed their name slightly it would remember that name change, even though the old/new mapping didn't contain any real identity change information.

For example, when Gabe_ became Gabe it would display every message from him as <Gabe_(Gabe)>. That doesn't tell me anything interesting about who Gabe is.

I decided to tweak the tracker to ignore small changes in names. Computers don't think in terms like small; they need a way to quantify difference and then see if it exceeds a specified threshold. Fortunately, lots of people have worked on just that problem -- mostly so that spell checkers can present you with a list that's close to the non-word you typed.

When I've worked with close enough strings in the past I've used the Levenshtein distance as implemented in the String::Approx module or the ancient Soundex algorithm. This time, however, I tried out the String::Trigram module as written by Tarek Ahmed, which implements the method proposed by Angell in this paper. Here's an explanation from String::Trigram's README file:

This consists of splitting some string into triples of characters and comparing those to the trigrams of some other string. For example the string kangaroo has the trigrams "{kan ang nga gar aro roo}". A wrongly typed kanagaroo has the trigrams "{kan ana nag aga gar aro roo}". To compute the similarity we divide the number of matching trigrams (tokens not types) by the number of all trigrams (types not tokens). For our example this means dividing 4 / 9 resulting in 0.44.

Thus far, at a 50% match threshold it's never failed to detect a real change or ignore a minor-change, and if it does I should just be able to notch the match-threshold higher or lower. Great stuff.
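The arithmetic in that README excerpt is easy to reproduce. Here is a bare-bones Python sketch of the idea, assuming plain unpadded trigrams and counting distinct trigrams only. The real String::Trigram module does more than this (it has padding and a `warp` option, for instance), so its scores won't necessarily match this toy version.

```python
def trigrams(word):
    """Return the set of three-character substrings of word."""
    return {word[i:i + 3] for i in range(len(word) - 2)}


def similarity(a, b):
    """Shared trigrams divided by all distinct trigrams in either string."""
    ta, tb = trigrams(a), trigrams(b)
    if not ta and not tb:
        # Strings shorter than three characters have no trigrams at all.
        return 1.0 if a == b else 0.0
    return len(ta & tb) / len(ta | tb)


print(round(similarity("kangaroo", "kanagaroo"), 2))  # 0.44, as in the README
print(similarity("Gabe_", "Gabe") >= 0.5)             # True: a minor change
```

Under this simplified measure Gabe_ and Gabe share two of three distinct trigrams, which clears a 50% threshold and so would be ignored as a cosmetic rename.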

The modified script can be viewed here and downloaded here.

Comments

If you wanted to only track nick changes in certain channels you'd add code like this at line 86:

return unless grep /^$chan$/, qw(#channelone #channeltwo #channel3);

I've modified 1.1 with a new /function, trackchan, that allows one to manage a list of channels where they want nick tracking to take place. If the list is empty, tracking will be done in all channels. The following is a unified diff.

What it doesn't do:

- Check to make sure that the channel you're passing in actually conforms to any standard channel naming conventions.

- Check to see if the channel already exists in the list before trying to remove it (though thanks to it just being a simple grep, no error is returned in any case).

- Check to see if you're adding a duplicate channel to the list (feel free, it doesn't affect the functionality one bit).

- Have an option for printing the channel list. I think I will modify it to just print the channel list in addition to the usage if /trackchan is called with no arguments.

-- Gabe

--- nick-track.pl.orig Thu Dec 22 10:37:34 2005

+++ nick-track.pl.trackchan Thu Dec 22 14:50:30 2005

@@ -22,7 +22,7 @@

use Irssi;

use strict;

use String::Trigram;

-use vars qw($VERSION %IRSSI %MAP);

+use vars qw($VERSION %IRSSI %MAP @CHANNELS);

$VERSION = "1.1";

%IRSSI = (

@@ -47,6 +47,7 @@

'Asrael' => 'Sammi',

'Cordelia' => 'Sammi',

);

+@CHANNELS = qw();

sub call_cmd {

my ($data, $server, $witem) = @_;

@@ -84,6 +85,13 @@

my ($chan, $nick_rec, $old_nick) = @_;

my $nick = $nick_rec->{'nick'};

+ # If channel list is empty, track for all channels.

+ # If channel list is non-empty, track only for channels in list.

+ my $channels = @CHANNELS;

+ if ($channels > 0) {

+ return unless grep /^$chan$/, @CHANNELS;

+ }

+

if (defined $MAP{$old_nick}) { # if a previous mappings exists

if (String::Trigram::compare($nick, $MAP{$old_nick},

warp => 1.8,

@@ -101,6 +109,34 @@

}

}

}

+

+sub trackchan_cmd {

+ my ($data, $server, $witem) = @_;

+ my ($cmd, $channel) = split ' ', $data;

+ my @cmds = qw(add del);

+

+ unless (defined $cmd && defined $channel && grep { $_ eq $cmd } @cmds) {

+ print "Usage: /trackchan [add|del] #channel";

+ return;

+ }

+

+ if ($cmd eq 'add') {

+ push @CHANNELS, $channel;

+ print "$channel added to channel list";

+ }

+

+ if ($cmd eq 'del') {

+ @CHANNELS = grep(!/^$channel$/, @CHANNELS);

+ print "$channel removed from channel list";

+ }

+

+ print "Current channel list:";

+ foreach my $channel (@CHANNELS) {

+ print " $channel";

+ }

+}

+

+Irssi::command_bind trackchan => \&trackchan_cmd;

Irssi::signal_add("message public", \&rewrite);

Irssi::signal_add("nicklist changed", \&nick_change);

Thanks, Dopp, great stuff! -- Ry4an

Linux on the Dell X1

Yesterday I got the warranty replacement machine for my (company's) Dell X300 laptop. Dell mailed me an X1, which seems a nice enough machine. It meets my firm criteria: under 3 lbs and thinner than an inch. If Apple would hit those numbers I'd be there in a second.

Unfortunately, it looks like getting Linux on to this thing is going to be a pain. Emperor Linux will sell an X1 with Linux pre-installed, but they want $450 to take the X1 I already "own" and put Linux on to it. If they're not able to simply mirror a debugged installation over, that says a lot about their volume. I value my time pretty highly, but $450 for a software install seems extreme.

Fortunately there are plenty of pages detailing how to get Linux running on the X1. I'll muddle through the process and attach my notes as comments.

Comments

I've found that to boot Knoppix using the external optical drive I need to use this boot time invocation:

knoppix fromhd=/dev/uba

Now to try qtparted to squish down the NTFS windows partition to something reasonable.

Using ntfsresize and fdisk I was able to squish the windows install down to 10GiB. Now I'm just waiting for my fedora core 4 DVD to arrive for install.

Fedora Core 4 installed from the DVD without a hitch. I had to download the ipw2200 firmware RPM to make the wireless work. The 855resolution utility as invoked from rc.local and tweaked in xorg.conf got the resolution notched up. Next up... ion.

Email Sub-Address Spam Frequency

My email server is configured such that email to ry4an-anything@ry4an.org gets correctly delivered to me. The dash and whatever is after it are retained but ignored completely.

When I give an email address to a company, say Northwest Airlines, I'll give them an email address that shows to whom it was given, say ry4an-nwa@ry4an.org. By doing this I'm able to check which companies are giving/selling/leaking my email address to spammers. Some of the leaks are surprising -- just a few weeks after giving out ry4an-philmont for the first time, giving it to the Boy Scouts, I started getting porn spam on it. When I called to let them know about the leak they assured me it was impossible.

Last month I decided to save all of my inbound spam and run some totals to see which sub-addresses got the most spam. Here are the counts:

- 6427 total spam messages to ry4an.org in 34 days

- 679 spam messages to plain ry4an@ry4an.org

- the 10 most spammed sub-addresses were

| Received | Address | Given to |

| 2542 | ry4an-slashdot | Posted to http://slashdot.org |

| 252 | ry4an-dip | Used in the Diplomacy community |

| 159 | ry4an-resume | On my resume |

| 141 | ry4an-yahoo | Given to yahoo.com |

| 125 | ry4an-cnet | Byline for some articles I wrote |

| 98 | ry4an-oldenburg | Defunct Oldenburg project |

| 88 | ry4an-poker | Used at https://ry4an.org/poker/ |

| 84 | ry4an-tclug | Given to the Twin Cities Linux Users' Group |

| 62 | ry4an-dns | Used for all my domain registrations |

| 44 | ry4an-keysigning | Posted at https://ry4an.org/keysigning/ |

So it looks like the worst offenders aren't companies to whom I've given my email address; the real damage comes from letting addresses get posted to the internet for automated crawlers to harvest.

Gmail users: You can do the same thing using the plus sign.
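Totals like the ones above take only a few lines to produce once the recipient addresses have been pulled out of the saved spam. A minimal Python sketch, assuming a plain list of recipient addresses as input; the function names are hypothetical, and the `sep` parameter covers the Gmail-style plus sign as well as the dash.

```python
from collections import Counter


def sub_address(recipient, user="ry4an", sep="-"):
    """Return the tag after sep, '' for the plain address, None if not ours."""
    local = recipient.split("@", 1)[0].lower()
    if local == user:
        return ""
    if local.startswith(user + sep):
        return local[len(user) + len(sep):]
    return None


def tally(recipients, user="ry4an", sep="-"):
    """Count messages per sub-address tag, most spammed first."""
    counts = Counter()
    for rcpt in recipients:
        tag = sub_address(rcpt, user, sep)
        if tag is not None:
            counts[tag] += 1
    return counts.most_common()
```

For example, `tally(["ry4an-slashdot@ry4an.org", "ry4an@ry4an.org"])` reports one message for the `slashdot` tag and one for the plain address.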

Comments

Yeah, the one I gave to United actually garners me the most spam. I emailed them to complain but was brushed off relatively quickly. -- Anonymous

Using Mutt to Automate Mailman Message Rejections

Since this post I've upgraded my mailman installation to a newer version, which allows me to automatically reject messages from non-subscribers without having to resort to external scripting.

However, some of the mailing lists I run are subscribed to by a significant number of members who can't be counted on to post from the email address with which they subscribed, or indeed to even understand what that means. For those lists a policy that automatically rejects messages from non-members is just too draconian. Unfortunately, that means the few spam messages a day from non-members which make it past my filters, but would normally be automatically rejected due to their non-member origins, have to be manually discarded so that I can approve the few non-member messages per month that really do belong on the list.

Relying on mailman's email control interface (as differentiated from its web control interface) I was able to craft the following mutt macro to make the rejecting of undesirable non-member messages a single keystroke affair:

macro index X ":set editor=touch^Mv/confirm^Mryqd:set editor=\"vim -c 'set nocindent' -c 'set textwidth=72' -c '/^$/+1' -c 'nohlsearch'\"^M"

When the 'X' key is pressed the message editor is set to the UNIX 'touch' command, which represents absolute minimal message editing. The rest of the macro replies to the confirmation subpart of the mailman message, which indicates rejection to mailman's email control interface. After the reply is sent the message editor is set back to its usual value (vim).

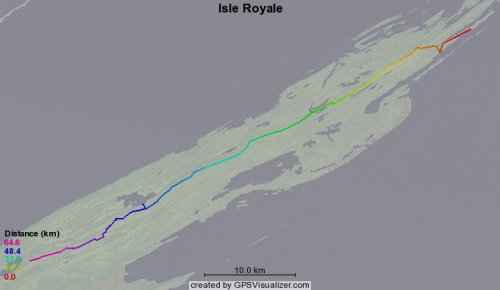

Isle Royale GPS Data

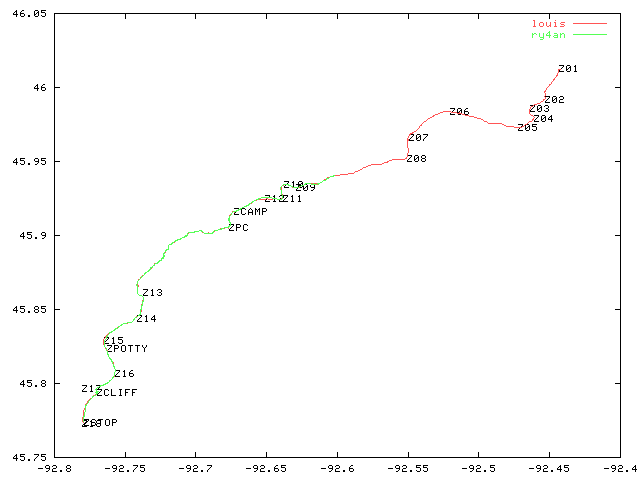

Earlier this month some friends and I hiked across Isle Royale in Lake Superior. Joe kept his GPS running and produced good track points in an odd export format from his Mac software called "Topo". I created a quick conversion script to produce this GPS format data which can be used with the GPS visualizer website to produce images like this:

CVS Commit Blocking

When editing source files checked out from CVS I sometimes want to prevent them from going back in to source control without further edits. Until now I've just used // FIXME comments and have tried to remember to grep for FIXMEs before committing the files back.

Problem is others use FIXME comments, and sometimes I forget to grep. So I've tweaked our CVSROOT files to prevent custom FIXME tags from going in to source control.

I appended this line to CVSROOT/commitinfo:

ALL $CVSROOT/CVSROOT/checkforry4an

Added this line to CVSROOT/checkoutlist:

checkforry4an Tell Ry4an he broke something with checkforry4an

and created a file named CVSROOT/checkforry4an containing:

#!/bin/sh

BASE=$1

shift

for thefile in "$@" ; do

if echo "$thefile" | grep -v Foreign | grep -q '\.java$' ; then

if grep -s -q 'FIXME RY4AN' "$thefile" ; then

echo "found a FIXME RY4AN in $thefile"

exit 1

fi

fi

done

Now when I put a // FIXME RY4AN comment into a source file commits break until I remove it.
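The same check is short in Python too, for anyone whose CVS server lacks a friendly shell. This is a sketch of the equivalent logic under the same assumptions as the shell version (the directory comes first in the arguments, then the file names); `should_block` and `main` are illustrative names.

```python
MARKER = "FIXME RY4AN"


def should_block(filename, contents, marker=MARKER):
    """True if a checked file still carries the personal FIXME marker."""
    # Same filter as the two greps on the file name in the shell script.
    if "Foreign" in filename or not filename.endswith(".java"):
        return False
    return marker in contents


def main(argv):
    """Return 1 (blocking the commit) if any listed file has the marker."""
    # argv[1] is the directory being committed; the file names follow it.
    for name in argv[2:]:
        try:
            with open(name, encoding="utf-8", errors="replace") as handle:
                if should_block(name, handle.read()):
                    print("found a %s in %s" % (MARKER, name))
                    return 1
        except OSError:
            continue  # like grep -s, quietly skip unreadable files
    return 0
```

To use it as the commitinfo script you'd finish the file with `sys.exit(main(sys.argv))` after importing `sys`; a non-zero exit is what makes CVS refuse the commit.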

Garble To GPX Track Conversion

For years I've been using garble to pull track and way point data off of my Garmin eTrex GPS. Unfortunately it produces data in a completely non-standard format. In the past I've written a little custom software to turn the garble data into maps.

Now I'm using http://gpsvisualizer.com to produce much nicer maps, but it takes data in the superior GPX format. The GPSBabel software will pull way point data off of Garmin GPSs and puts them into GPX, but it doesn't handle tracks.

So, I needed something that took garble output like:

45.222774, -92.767997 / Sun Apr 10 18:57:32 2005

and turned it into GPX statements like:

<trkpt lat="45.222774" lon="-92.767997"><time>2005-04-10T18:57:32Z</time></trkpt>

this Perl snippet I wrote:

#!/bin/perl -w

use strict;

use Date::Parse;

use Date::Format;

while (<>) {

chomp;

unless (/(\S+), (\S+) \/ (.*)/) {

print STDERR "Unparseable line: '$_'\n";

next;

}

my $when = str2time($3);

my $time = time2str('%Y-%m-%dT%H:%M:%SZ', $when);

print qq|<trkpt lat="$1" lon="$2"><time>$time</time></trkpt>\n|;

}

does the job.
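For comparison, here is roughly the same conversion in Python using only the standard library. It's a sketch rather than a drop-in replacement: `to_trkpt` is a made-up name, and it assumes the timestamp always looks like the sample above and that English day and month names are in effect.

```python
import re
from datetime import datetime

# "lat, lon / timestamp", the same shape the Perl regex expects.
LINE = re.compile(r"(\S+), (\S+) / (.*)")


def to_trkpt(line):
    """Convert one garble track line to a GPX <trkpt> element, or None."""
    match = LINE.match(line.strip())
    if not match:
        return None
    lat, lon, stamp = match.groups()
    # Raises ValueError if the timestamp isn't in the expected layout.
    when = datetime.strptime(stamp, "%a %b %d %H:%M:%S %Y")
    return '<trkpt lat="%s" lon="%s"><time>%s</time></trkpt>' % (
        lat, lon, when.strftime("%Y-%m-%dT%H:%M:%SZ"))


print(to_trkpt("45.222774, -92.767997 / Sun Apr 10 18:57:32 2005"))
```

Like the Perl version, it carries the clock time across unchanged and simply appends the Z, so whether the result is truly UTC depends on what garble wrote out.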

Approval Voting for the ACM

Approval voting is an alternate voting system that has many benefits as compared to Instant Run-Off Voting. For years I've been running the on-line officer elections for the local campus chapter of the Association for Computing Machinery. Last year I talked them into switching to approval voting (even though it probably violates their charter), and it worked really well. Their elections have kicked off again, and once again I'm hosting them and using my voting script.
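For anyone unfamiliar with the mechanics: under approval voting each voter marks every candidate they find acceptable, and whoever collects the most approvals wins. Here is a toy tally in Python, purely illustrative and unrelated to the actual voting script; the candidate names are invented.

```python
from collections import Counter


def approval_winners(ballots):
    """Return (winners, totals); each ballot lists the approved candidates."""
    totals = Counter()
    for ballot in ballots:
        totals.update(set(ballot))  # a candidate counts once per ballot
    if not totals:
        return [], totals
    best = max(totals.values())
    # More than one name comes back only when there is a tie for first.
    return sorted(name for name, votes in totals.items() if votes == best), totals


ballots = [{"ann", "bob"}, {"bob"}, {"cy", "bob"}, {"ann"}]
winners, totals = approval_winners(ballots)
print(winners, dict(totals))
```

Counting is a single pass with no elimination rounds, which is a large part of the appeal next to instant run-off.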

SwarmStream Article on the O'Reilly Network

I wrote an article that got posted on the O'Reilly Network. It sounds a little more huckster-ish than I'd like, but the tech does get explained pretty well. There's a link to the new beta 2 release of SwarmStream Public Edition at the bottom of the article.

Detecting Recently Used Words On the Fly

When writing I frequently find myself searching backward, either visually or using a reverse-find, to see if I've previously used the word that I've just used. Some words, say furthermore for example, just can't show up more than once per paragraph or two without looking overused.

I was thinking that if my editor/word-processor had a feature wherein all instances of the word I just typed were briefly highlighted it would allow me to notice awkward repeats without having to actively watch for them. Nothing terribly intrusive, mind you, but just a quick flicker of highlight while I type.

A little time spent figuring out key bindings in vim, my editor of choice, left me with this ugly looking command:

inoremap <space> <esc>"zyiw:let @/=@z<cr>`.a<space>

As a proof-of-concept, it works pretty much exactly how I described, though it breaks down a bit around punctuation and double spaces. I'm sure someone with stronger vim-fu could iron out the kinks, but it's good enough for me for now. Here's a mini-screenshot of the highlighting in action.

I'm sure the makers of real word processors, like OpenOffice, could add such a feature without much work, but maybe no one but me would ever use it.

Obscuring MoinMoin Wiki Referrers

When you click on a link in your browser to go to a new web page, your browser sends along a Referer: header, which tells the owner of the linked-to site the URL of the page where the link was found. It's a nice little feature that helps website creators know who is linking to them. Referer headers are easily faked or disabled, but most people don't bother, because there's generally no harm in telling a website owner who told you about their site.

However, there are cases where you don't want the owner of the link target to know who has linked to them. We've run into one of these where I work because one of our internal websites is a wiki. One feature of wikis is that the URLs tend to be very descriptive. Pages leaving addresses like http://wiki.internal/ProspectivePartners/ in the Referer: header might give away more information than we want showing up in someone else's logs.

The usual way to muffle the outbound referrer information from the linking website is to route the user's browser through a redirect. I installed a simple redirect script and figured out I could get MoinMoin, our wiki software of choice, to route all external links through it by inserting this into the moin_config.py file:

    url_mappings = {
        'http://': 'http://internal/redirect/?http://',
        'https://': 'http://internal/redirect/?https://'
    }

Now the targets of the links in our internal wiki only see '-' in their referrer logs, and no code changes were necessary.
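The effect of that mapping is plain prefix substitution on every outbound URL. Here's a minimal sketch of the idea in Python. It is not MoinMoin's actual code, and the `map_url` name is made up for illustration.

```python
# Same mapping as in the config above; "internal" is the hostname of the redirect box.
URL_MAPPINGS = {
    "http://": "http://internal/redirect/?http://",
    "https://": "http://internal/redirect/?https://",
}


def map_url(url, mappings=URL_MAPPINGS):
    """Rewrite url by swapping the first matching prefix; leave it alone otherwise."""
    for prefix, replacement in mappings.items():
        if url.startswith(prefix):
            # Apply the substitution once only, so the rewritten URL is not rewritten again.
            return replacement + url[len(prefix):]
    return url
```

So a link to `http://example.com/page` comes out as `http://internal/redirect/?http://example.com/page`, while wiki-internal links that don't start with a scheme pass through unchanged.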

Comments

I'm working on installing a somewhat sensitive wiki, so this is interesting.

How does the redirect script remove the referer, though? I couldn't figure that out from the script.

It just does, due to the nature of the Referer: header. When doing a GET, a browser provides the name of the page where the clicked link was found. When a link on page A points to a redirection script, B, the browser tells B that the referrer was A. Then the redirect script, B, tells the browser to go to page C -- redirects it. When the browser goes to page C, the real target page, it doesn't send a Referer: header because it's not following a link -- it's following a redirect. So the site owner of C never sees page A in the referrer logs. S/he doesn't see the address of B in those logs either, because browsers just don't send a Referer: header at all on redirects. -- Ry4an

There was a little more talk about this on the MoinMoin general mailing list, including my proposal for adding redirect-driven masking as a configurable moin option. -- Ry4an

SwarmStream Public Edition

My latest project for Onion Networks has just been released: it's a first beta release of SwarmStream Public Edition, a completely free Java protocol handler plug-in that transparently augments any HTTP data transfer with caching, automatic fail-over, automatic resume, and wide-area file transfer acceleration.

SwarmStream Public Edition is a scaled-down version of our commercially-licensable SwarmStream SDK. Both systems are designed to provide networked applications with high levels of reliability and performance by combining commodity servers and cheap bandwidth with intelligent networking software.

Using SwarmStream Public Edition couldn't be easier: you just set a property that adds its package as a Java protocol handler, like so:

java -Djava.protocol.handler.pkgs=com.onionnetworks.sspe.protocol you.main.Class

So, if you're doing any sort of HTTP data transfer in your Java application, there is no reason not to download SSPE and try it out with your application. There are no code changes required at all.

Better Random Subject Lines

Earlier I talked about generating random Subject lines for emails. I settled on something that looked like Subject: Your email (1024). Those were fine, but got dull quickly. By switching the procmail rules to look like:

    :0 fhw
    * ^Subject:[\ ]*$
    |formail -i "Subject: RANDOM: $(fortune -n 65 -s | perl -pe 's/\s+/ /g')"

    :0 fhw
    * !^Subject:
    |formail -i "Subject: RANDOM: $(fortune -n 65 -s | perl -pe 's/\s+/ /g')"

I'm now able to get random subject lines with a little more meat to them. They come out looking like: RANDOM: The coast was clear. -- Lope de Vega

However, given that the default fortune data files only provide 3371 sayings that are 65 characters or under, the birthday paradox will cause a subject collision a lot sooner than with the 2^15 possible subject lines I had before.
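To put rough numbers on that, here's a small Python sketch of the birthday-paradox arithmetic. It assumes every subject line is drawn uniformly and independently from the pool, which is fair enough for fortune but only an approximation for process ids, which are handed out more or less sequentially.

```python
def collision_probability(pool_size, draws):
    """Chance that at least two of `draws` uniform picks from the pool coincide."""
    p_unique = 1.0
    for i in range(draws):
        # The (i+1)-th pick must avoid the i values already used.
        p_unique *= (pool_size - i) / pool_size
    return 1.0 - p_unique


def draws_for_even_odds(pool_size):
    """Smallest number of picks at which a collision is at least as likely as not."""
    draws = 0
    p_unique = 1.0
    while p_unique > 0.5:
        p_unique *= (pool_size - draws) / pool_size
        draws += 1
    return draws
```

Comparing `draws_for_even_odds(3371)` with `draws_for_even_odds(2**15)` shows the gap: the break-even point grows only with the square root of the pool size, so it lands on the order of 70 messages for the fortune pool versus a couple of hundred for the process-id scheme.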

Update: It's been a few weeks since I've had this in place and my principal subject-less correspondent has noticed how eerily often the random subject lines match the topic of the email.

Jetty with Large File Support

Jetty is a great Java servlet container and web server. It's fully embeddable, and at OnionNetworks we've used it in many of our products. It does, however, have the same 2GiB file size limit that a lot of software does. This limit comes from using a 32-bit value to store file size, yielding a 4GiB (unsigned) or 2GiB (signed) maximum, and represents a real design gaffe on the part of the developers.

Here at OnionNetworks we needed that limit eliminated, so last year I twiddled the fields and modified the accessors wherever necessary. After I initially offered a fix and eventually posted it, it looks like Greg is getting ready to include it, thanks in part to external pressure. Now if only Sun would fix the root of the problem.

Adding a Subject with Procmail

Lately I've been corresponding a great deal with someone who doesn't elect to use the Subject: line in emails. When responding to these emails my mail application, mutt, uses the Subject line: re: your mail. Mutt also groups conversations into threads using (among other things) the Subject line. So every reply to every person who has sent a message with a blank subject line gets grouped into a single thread when they, in fact, have nothing to do with one another.

I decided to create Subject lines for incoming messages without them on the fly using the standard procmail tool. This pair of recipes does the trick:

    :0 fhw
    * ^Subject:[\ ]*$
    |formail -i "Subject: Your email ($$)"

    :0 fhw
    * !^Subject:
    |formail -i "Subject: Your email ($$)"

The $$ gets turned into a low number (the process id actually) which is unique enough to keep threads separate. The resulting Subject lines look like: Subject: Your email (1024) and have been working quite well.

There's probably a way to use a single recipe to catch both cases (blank Subject and no Subject), but I hate procmail's almost egrep and just settled on this.
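For comparison, outside of procmail the two cases collapse into a single check. Here's a minimal sketch using Python's standard email package. It's an illustration of the logic rather than a drop-in replacement for the recipes, and the `ensure_subject` name and `tag` parameter are made up for the example.

```python
import os
from email import message_from_string


def ensure_subject(raw_message, tag=None):
    """Return the message text, adding a Subject if it is missing or blank."""
    msg = message_from_string(raw_message)
    subject = msg["Subject"]  # None when the header is absent
    if subject is None or not subject.strip():
        # Mirror the recipes: use the process id unless a tag is supplied.
        tag = os.getpid() if tag is None else tag
        del msg["Subject"]  # harmless when the header is absent
        msg["Subject"] = f"Your email ({tag})"
    return msg.as_string()
```

A missing header and an empty one both fall through the same `if`, which is the single-rule behaviour I couldn't be bothered to coax out of procmail.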

Comments

Test comment.

The girl who refuses to use subject lines is leaving a comment. -- Kate Bauer

I updated this idea in a later entry. -- Ry4an

New UnBlog System

I've switched from a mailing list driven system to a wiki based one for this UnBlog. It's less weird than the mailing list setup was, but it's not exactly Movable Type either. It offers RSS feeds and subscriptions, though through entirely different mechanisms than the list did. I think I've moved everything over well enough that there are no dead links into the old space. I ended up using my WikiChump thing, modified to handle attachments and create comment pages, to populate the data.

AntFlow 1.0rc1 Released

I code for a hobby and a profession, but usually it's only the hobby stuff I can release here. However I'm happy to say and proud to announce that Onion Networks, my employer, has okay-ed the release of AntFlow, a tool I largely wrote.

AntFlow adds hot folder triggers and workflow functionality to the ever popular Ant build tool. It's a great fit and a right good bit of code, so check it out at http://antflow.onionnetworks.com/ .

Silly Defensive Prompt Coloring

I don't like color in my command line windows. Colorization in ls's directory listings drives me bonkers; it's the first thing I turn off on a new system. I have, however, relented and added a little bit of conditional color to save me from an all too frequent error.

I have access to a lot of UNIX and UNIX-like systems. Some are machines I run, some are my employer's, and some belong to customers. Most all of them I've never physically seen but instead access through remote ssh, secure shell, connections. My normal command line prompt on these machines looks like:

[user@host ~]$

That says I'm on machine 'host' and logged in as 'user'. You'd think that would be enough to alert me when 'host' isn't my normal desktop machine or when I'm logged in as someone other than 'ry4an', but you'd be wrong. After issuing disastrous rm * commands when I didn't notice I was root and after one too many 'shutdown -h' when I didn't realize I was issuing commands to a server in a remote data-center instead of the computer in my closet, I finally wised up and did something about it.

Now when I'm logged into a remote machine the at-sign in my prompt is black-on-white instead of its normal white-on-black. The 'host' portion of each of my prompts is color coded to reflect which machine I'm logged in to, and when the 'user' portion says 'root' it's got a bright red background to let me know that commands are at their most dangerous.

None of this was hard to do; it was all just stuff I finally decided to do. I've attached the shell snippet I put in my /etc/bashrc to do the colorization of the three different parts. The local/remote detection probably only works with the GNU tool chain, but one never knows.

Dumbing Down scp

The tool scp, UNIX for secure copy, is a wrapper around ssh, secure shell. It lets you move files from one machine to another through an encrypted connection with a command line syntax similar to that of the standard cp, local copy, command. I use it 100 times a day.

The command line syntax for scp is at its most basic:

scp <source> <destination>

Either the source, destination, or both can be on a remote computer. To denote that one just prefixes the file name with "username@machinename:". So this command:

scp myfile ry4an@elsewhere:/home/ry4an/myfile

would copy the contents of the local file named 'myfile' to a remote system named 'elsewhere' and place it in the directory /home/ry4an with the name myfile.

As a shortcut one can omit the destination directory and filename, in which case they default to the home directory of the specified user and the same filename as the original. Nice, simple, handy, straightforward.

However, when you're an idiot in a rush like me, in addition to skipping the destination directory and filename you also skip the colon, yielding a command like:

scp myfile ry4an@elsewhere

When you make that mistake scp decides that what you wanted to do was take the local file named 'myfile' and copy it to another local file named 'ry4an@elsewhere'. That's certainly consistent with its syntax -- no colon, no remote system -- but it is never what I want.

Instead I go to the 'elsewhere' machine and start looking for 'myfile' and it's not there, and I'm very puzzled because scp didn't give me any error messages. I don't know of anyone who has ever wanted scp to do a local copy, but for some reason the scp developers took extra time to add that feature. I want to remove it.

The best way would be to edit the scp source and neuter the part that does local copies. The problem with that is I'd have to modify the scp program on lots of machines, many of which I have no control over.

So, as a cheesy workaround I spent a few seconds writing a bash shell function that refuses to invoke scp if there is no colon in the command line. It's a crappy band-aid on what's definitely a user-error problem, but it's already saved me from making the same frustrating mistake 10 times since I wrote it last week. Here it is in case anyone else has the same un-problem I do:

    function scp () {
        if echo $* | grep -v -q -s : ; then
            echo scp: missing colon
        else
            /usr/bin/scp "$@"
        fi